Introduction

From time immemorial, human life has centered around three tenets.

- Innovation to simplify daily activities

- Education to teach one’s peers about different activities

- Teamwork to accomplish shared tasks successfully

One aspect binds all three tenets seamlessly together: communication. From hunter-gatherer tribes hunting in packs to modern human beings building spaceships, communication has been the key differentiator between success and failure.

Language sits at the core of any communication activity. Imagine a world without language, and then you might grasp the reality of how difficult it would be to do the most basic of tasks. Also, language is the one aspect every human being is trained in right from birth. Thus, it’s safe to assume language drives the world forward.

If language has such a lasting impact on everyone’s life, why not use it with machines? Think about a system where anyone, without any prior training in how it works, can give commands, execute tasks, and achieve their goals. That is only possible by introducing language as an interface. This line of thinking is behind the rise of Conversational AI (CAI).

Given language’s ubiquity, few areas of technology will have a more far-reaching impact on society in the years ahead – Forbes

However, the ride to simplify tasks with human language is a bumpy one and it comes with its fair share of challenges.

Before I start to discuss the roadblocks, I would like to introduce a concept called Natural Language Understanding.

What is Natural Language Understanding and Why is it Challenging?

Natural Language Understanding, abbreviated as NLU, is the ability of computers to understand human language as it’s spoken or typed and respond in the same language. It’s a branch of AI that uses algorithms to convert human input (both text and voice) into a structured ontology, which is a data model containing semantic and pragmatic definitions. There are two key aspects of any system employing NLU.

- Intent Recognition: Deals with the ability of the system to identify what the user wants to accomplish from the input speech or text. In very simple terms, intent recognition helps set the “context” of the communication.

- Entity Recognition: Helps the NLU system identify and group entities in speech or text as named entities and numerical entities. Named entities are further broken down into person names, location names, organization names, etc.
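The two aspects above can be illustrated with a minimal sketch. It is a toy, not how production NLU works: the intent names, the keyword lists, and the `NLUResult` type are all hypothetical, and real systems typically rely on trained models rather than keyword and capitalization rules.

```python
import re
from dataclasses import dataclass, field

# Hypothetical intents and trigger keywords, chosen only for illustration.
INTENT_KEYWORDS = {
    "check_balance": ["balance", "how much"],
    "transfer_money": ["transfer", "send"],
}


@dataclass
class NLUResult:
    intent: str
    entities: dict = field(default_factory=dict)


def parse(utterance: str) -> NLUResult:
    """Turn free text into a structured result: one intent plus entities."""
    text = utterance.lower()

    # Intent recognition: pick the first intent whose keyword appears.
    intent = "unknown"
    for name, keywords in INTENT_KEYWORDS.items():
        if any(keyword in text for keyword in keywords):
            intent = name
            break

    # Numerical entities: integers or decimals.
    numbers = re.findall(r"\d+(?:\.\d+)?", utterance)

    # Named entities (very naive): capitalized words after the first word.
    words = utterance.split()
    names = [w.strip(".,!?") for w in words[1:] if w[:1].isupper()]

    return NLUResult(intent, {"numbers": numbers, "names": names})


result = parse("Please transfer 250 dollars to Alice")
print(result.intent, result.entities)
```

Even this toy shows the shape of the output: free text goes in, and a structured record with an intent and grouped entities comes out.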

Building machines that can understand language has thus been a central goal of the field of artificial intelligence dating back to its earliest days – Forbes

If the system fails to process the input correctly, the result will be unwanted and irrelevant. The whole exercise will become counterproductive.

Human language is full of complexities, such as a single word carrying multiple meanings depending on context, and this is a prime reason machines find it difficult to understand and assimilate.

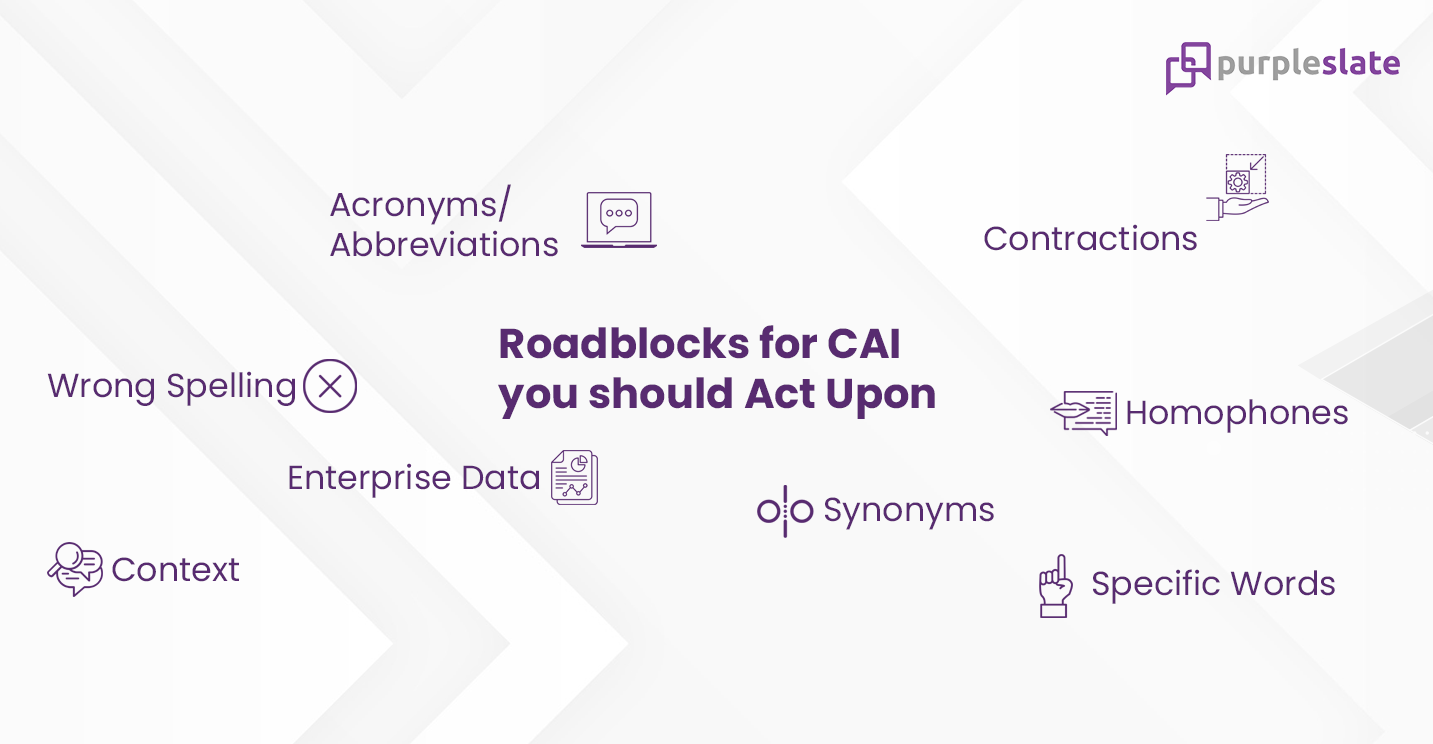

Top Challenges Faced by Every Conversational AI Solution

A deep understanding of human language requires understanding the meaning of words and how they are used to convey the intended message. Humans are intrinsically capable of understanding the rules and culture associated with a language. For a machine, however, the ambiguity and imprecision of natural language are what make language understanding so much more difficult. Every Conversational AI solution needs to address these roadblocks to ensure a seamless delivery of insights.

Acronyms/Abbreviations

Acronyms are abbreviations formed using the initial letters of a phrase. NASA, FLAG, and US Navy SEAL are some common examples of acronyms. The underlying problem with acronyms is that some of them are spelled and pronounced like existing words in the language. The Navy SEALs are an elite US naval special forces unit, and SEAL is an acronym for Sea, Air, and Land. However, a CAI system may equate the acronym SEAL with the aquatic animal, the seal.
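One simple cue for telling SEAL from seal in typed input is capitalization. The sketch below assumes a small, hypothetical acronym table and a hypothetical `find_acronyms` helper. It would not help with voice input or with users who type everything in lowercase, which is part of why the problem is hard.

```python
# Hypothetical acronym table; a real system would need a far larger,
# domain-specific one.
ACRONYMS = {
    "SEAL": "Sea, Air, and Land",
    "NASA": "National Aeronautics and Space Administration",
}


def find_acronyms(text: str) -> dict:
    """Return known acronyms in the text, using capitalization as the only cue."""
    found = {}
    for token in text.split():
        word = token.strip(".,!?;:\"'")
        # Treat "SEALs" like "SEAL" by dropping a trailing plural "s".
        if word.endswith("s") and word[:-1].isupper():
            word = word[:-1]
        if word.isupper() and word in ACRONYMS:
            found[word] = ACRONYMS[word]
    return found


print(find_acronyms("The SEALs spotted a seal near the NASA launch site."))
```

Here the lowercase "seal" is left alone as an ordinary word, while "SEALs" and "NASA" are matched against the table.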

Contractions

Contractions came out of the effort to simplify typing, especially where there’s a restriction on character count, as on Twitter. So, replacing “You” with “U”, “Please” with “Pls”, “Account” with “acct”, and “got you” with “gotcha” became common practice. CAI systems struggle to understand the intent of the user here because these terms are not part of their vocabulary.
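A common way to soften this problem is to expand known shorthand before the text reaches the rest of the pipeline. The following is a minimal sketch under that assumption; the `SHORTHAND` table and the `normalize` function are hypothetical names, and the table only covers the examples mentioned above.

```python
import re

# Hypothetical lookup table built from the examples in the text.
SHORTHAND = {
    "u": "you",
    "pls": "please",
    "acct": "account",
    "gotcha": "got you",
}


def normalize(text: str) -> str:
    """Replace known shorthand words with their full forms."""

    def expand(match: re.Match) -> str:
        word = match.group(0)
        # Unknown words are returned unchanged.
        return SHORTHAND.get(word.lower(), word)

    # Only alphabetic runs are considered, so punctuation is preserved.
    return re.sub(r"[A-Za-z]+", expand, text)


print(normalize("Pls check my acct, U there?"))
```

Note that this sketch does not restore the original capitalization of expanded words, and a fixed table cannot keep up with newly coined shorthand.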

Homophones

Homophones are similar-sounding words like “Year” & “Ear”, “Their” & “There”, “Due” & “Dew”, etc. Homophones are not a challenge with textual inputs but become a major one with voice inputs. Factor different accents and colloquial pronunciations into this equation. At times even humans need to lean in and listen twice to understand the meaning, so think about the plight of the CAI.

Context

Consider the sentence, “The amount of armaments carried by a Tomahawk Attack Helicopter is two times that of a Tiger T2 Battle Tank.” The term “amount” signifies the number of armaments. Look at the sentence, “The amount of propane in the cylinder is 12 pounds.” The same term “amount” signifies weight here. One term can change its meaning based on context, and that is a problem area for Conversational AI.

Enterprise Data

In my organization, I call my customer a client, while in another organization the customer is referred to as an account. Both words carry the same meaning but are stored differently in the two enterprise data stores. Conversational AI solutions face these issues on a daily basis.
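One way to picture a fix is a per-organization vocabulary that maps each local term onto a shared canonical one. This is only a sketch: the organization keys, the `ORG_VOCABULARY` table, and the `to_canonical` function are hypothetical.

```python
# Hypothetical per-organization vocabularies mapping local terms
# to one shared canonical term.
ORG_VOCABULARY = {
    "org_a": {"client": "customer"},
    "org_b": {"account": "customer"},
}


def to_canonical(term: str, org: str) -> str:
    """Map an organization's local term to the canonical term, if one is known."""
    vocabulary = ORG_VOCABULARY.get(org, {})
    key = term.lower()
    # Terms without a mapping fall through unchanged (lowercased).
    return vocabulary.get(key, key)


print(to_canonical("Client", "org_a"))
print(to_canonical("account", "org_b"))
print(to_canonical("account", "org_a"))
```

Keeping the mapping per organization matters: "account" means customer in one data store but may mean something else entirely in another.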

Synonyms

Extent, span, reach, length, and range are all synonyms of the word distance. The range of a microphone, for instance, signifies the effective distance or radius within which it works. A CAI system that lacks an understanding of context won’t understand synonyms.

Specific Words

Multiple myeloma, cerebral hemorrhage, epinephrine, and cardiothoracic surgery are terms commonly found across the database of a multispecialty hospital. Such words are not part of everyday vocabulary; they have niche usage with very specific meanings. Conversational AI platforms that have not been exposed to these terms do not understand their meaning or implications, just as humans who aren’t medical professionals do not.

Wrong Spelling

It is common to make spelling errors when typing, especially on a smartphone. Humans can understand that Chronc Hart Disise means Chronic Heart Disease, but for a CAI system, its first instinct will be to throw an error.
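Fuzzy matching against a known vocabulary is one simple way to recover from such typos. The sketch below uses `difflib.get_close_matches` from Python's standard library; the `VOCABULARY` list, the `correct` function, and the 0.6 cutoff are assumptions made for illustration, not a description of how any particular CAI product works.

```python
import difflib

# Hypothetical vocabulary; in practice it would come from the domain's data.
VOCABULARY = ["chronic", "heart", "disease", "kidney", "failure"]


def correct(text: str, cutoff: float = 0.6) -> str:
    """Replace each word with its closest vocabulary entry, if one is close enough."""
    corrected = []
    for word in text.lower().split():
        matches = difflib.get_close_matches(word, VOCABULARY, n=1, cutoff=cutoff)
        # Keep the original word when nothing in the vocabulary is similar.
        corrected.append(matches[0] if matches else word)
    return " ".join(corrected)


print(correct("Chronc Hart Disise"))  # chronic heart disease
```

A higher cutoff makes the correction more conservative, while a lower one risks replacing valid words that simply are not in the vocabulary.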

The Magic Behind Conversational AI: Language Understanding

I have shown why it’s an uphill battle for Conversational AI platforms to understand the user’s question and respond with the correct answer. The problems faced by the platform are not alien to us, as we also face them every day. So, how does Conversational AI address them?

That’s exactly what I answer in the article Diving Deeper into the World of Domain-Specific Vocabulary.